Box Turtle Bulletin

News and commentary about the anti-gay lobby

September 17th, 2007

Last Thursday, Stanton Jones and Mark Yarhouse announced the results of their new ex-gay study at a press conference in Nashville, where the American Association of Christian Counselors was holding its annual conference. The study, Ex-Gays? A Longitudinal Study of Religiously Mediated Change in Sexual Orientation, will be published by InterVarsity Press on October 10. This review is based on a thirteen-page synopsis that was provided for the AACC.

This study was funded by Exodus, with Jones and Yarhouse promising that “we would be reporting publicly the results of our outcome study regardless of how encouraging or embarrassing Exodus might find those results.” Based on Exodus’ press release and Alan Chambers’ presence at the press conference, it appears that Exodus is quite pleased with the study. Exodus, by the way, chose that same weekend to host their regional conference in Nashville, making for a very well coordinated event.

According to Jones and Yarhouse, their study was intended to answer two questions: Is change in sexual orientation possible, and are attempts to change harmful? And to answer those questions, they set out to do something that hadn’t been done before. They constructed what’s called a longitudinal or prospective (i.e. forward-looking) study, where they followed a population of study participants as they were beginning their experience with ex-gay ministries and continued to follow them over a period of four years.

This is an important feature of the study. One of the many criticisms of Robert Spitzer’s 2003 ex-gay study was that it was a retrospective (i.e., backwards-looking) study. In other words, study participants were asked to remember back to before they began their attempts to change and report from memory their sexual orientation and attractions. Jones and Yarhouse chose to conduct a prospective study instead:

In contrast to retrospective methods that ask participants to remember change experiences that happened in their pasts, a prospective methodology begins assessment when individuals are starting the change process and assesses them as the results unfold.

Because it’s longitudinal, beginning as the ex-gay participants begin their own journey, there’s no reliance on possibly faulty memories when asked, “What were your attractions several years ago when you started?”

When the study began, participants undertook a number of face-to-face interviews. This was Time 1. There were two more interviews, Time 2 and Time 3, with the span between Time 1 and Time 3 being between thirty months and four years. Most of the Time 2 interviews were conducted face to face (15% were over the phone) and all of the Time 3 interviews were done over the phone. For all three, Jones and Yarhouse used a number of recognized, standardized measures for sexuality and mental health, with the crucial self-reports of sexuality being conducted via mail-in questionnaires.

Another weakness of Robert Spitzer’s study was that he didn’t use any standard measures for sexual orientation. He didn’t use the Kinsey scale (where 0 = completely heterosexual and 6 = completely homosexual), nor did he use the Shively-DeCecco scales (which separate the intensity of homosexual and heterosexual attractions onto two scales for independent measurement, with the zero axis representing perfect asexuality). Jones and Yarhouse used both sets of scales for their analysis, using commonly recognized standardized questionnaires to determine ratings at Time 1, Time 2 and Time 3.

Probably the weakest link in the design was in relying on self-reports for assessing sexual orientation instead of physiological measures of arousal. They addressed those criticisms this way:

Psychophysiological measures assess sexual arousal and orientation by attaching sensors to the genitals of subjects and measuring sexual arousal while the subjects watch pornography. We judged these methods as pragmatically impossible given the dispersed nature of our sample and the limitations of our funding, as morally unacceptable to the bulk of our research participants, and as not justified in light of current research challenging the reliability and validity of the methods themselves.

These are all legitimate objections as far as penile and vaginal plethysmography are concerned. There are, however, emerging technologies involving MRIs which may be useful for future studies.

While Jones and Yarhouse’s study appears to be very well designed, it quickly falls apart on execution. The sample size was disappointingly small, too small for an effective retrospective study. They told a reporter from Christianity Today that they had hoped to recruit some three hundred participants, but they found “many Exodus ministries mysteriously uncooperative.” They only wound up with 98 at the beginning of the study (72 men and 26 women), a population they describe as “respectably large.” Yet it is half the size of Spitzer’s 2003 study.

Jones and Yarhouse wanted to limit their study’s participants to those who were in their first year of ex-gay ministry. But when they found that they were having trouble getting enough people to participate (they only found 57 subjects who met this criterion), they expanded their study to include 41 subjects who had been involved in ex-gay ministries for between one and three years. The 57 participants who had been in ex-gay ministries for less than a year are referred to as the “Phase 1” subpopulation, and the 41 who were added to increase the sample size were labeled the “Phase 2” subpopulation.

This poses two critically important problems. First, we just saw Jones and Yarhouse explain that the whole reason they did a prospective study was to reduce the faulty memories of “change experiences that happened in their pasts” — errors which can occur when asking people to go back as far as three years to assess their beginning points on the Kinsey and Shively-DeCecco scales. This was the very problem that Jones and Yarhouse hoped to avoid in designing a prospective longitudinal study, but in the end nearly half of their results ended up being based on retrospective responses.

This diluted the very purpose of doing a longitudinal study, and as Jones and Yarhouse describe it, this also clearly affected the results:

We expected that the results of change would be somewhat less positive in this group (phase 1), as individuals experiencing difficulty with change would be likely to get frustrated or discouraged early on and drop out of the change process. We were able to retain these Phase 1 subjects in our study at the same rate as the whole population, and indeed found that change results from them were a bit less positive.

Left unsaid but clearly implied is the second problem with adding Phase 2 participants. Since they had already hung in there for between one and three years, that subpopulation would not have included those who entered ex-gay ministries at the same time they did but who were discouraged early on and dropped out. It’s no wonder the change results for Phase 1 were less positive than Phase 2. There’s no indication how “less positive” those results were, not in this synopsis anyway. Hopefully the book will break these numbers out.

But in the synopsis at least, the study’s results appear to combine Phase 2 and Phase 1 participants, which represents an unacceptable mixing of prospective (Phase 1) and retrospective (Phase 2) participants. And since the Phase 2 participants make up nearly half the total sample, this ruins any chance of this being a truly prospective study.
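To put a number on “nearly half,” here is a quick back-of-the-envelope sketch using only the two counts given in the synopsis (57 and 41). The variable and function names are mine, chosen for illustration; nothing here comes from the study’s own analysis.

```python
# Counts as reported in the synopsis and described in this review.
PHASE_1 = 57  # in their first year of ex-gay ministry (truly prospective)
PHASE_2 = 41  # already involved for one to three years (retrospective baseline)


def share(part: int, whole: int) -> float:
    """Return part/whole expressed as a percentage."""
    return 100.0 * part / whole


total = PHASE_1 + PHASE_2
phase_2_share = share(PHASE_2, total)

print(f"Total sample: {total}")
print(f"Phase 2 share of sample: {phase_2_share:.1f}%")
```

Roughly two out of every five participants, in other words, entered the study with a baseline that depended on memory.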

Whenever a longitudinal study is being conducted over a period of several years, there are always dropouts along the way. This is common and to be expected. That makes it all the more important to begin the study with a large population. Unfortunately, this one wasn’t terribly large to begin with; it started out at less than half the size of Spitzer’s 2003 study. Jones and Yarhouse report that:

Over time, our sample eroded from 98 subjects at our initial Time 1 assessment to 85 at Time 2 and 73 at Time 3, which is a Time 1 to Time 3 retention rate of 74.5%. This retention rate compares favorable to that of the best “gold standard” longitudinal studies. For example, the widely respected and amply funded National Longitudinal Study of Adolescent Health (or Add Health study) reported a retention rate from Time 1 to Time 3 of 73% for their enormous sample.

The Add Health Study Jones and Yarhouse cite began with 20,745 in 1996, ending with 15,170 during Wave 3 in 2001-2002. But this retention rate of 73% was spread over some 5-6 years, not the three to four years of Jones and Yarhouse’s study.
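The arithmetic behind that comparison can be checked with a short sketch. The overall retention figures use the counts quoted above. The per-year figures are my own illustration, not anything either study reported: they assume dropouts occurred at a constant yearly rate, and they use the approximate spans mentioned above (three to four years for Jones and Yarhouse, five to six for Add Health).

```python
def retention(start: int, end: int) -> float:
    """Overall retention as a percentage of the starting sample."""
    return 100.0 * end / start


def annual_retention(start: int, end: int, years: float) -> float:
    """Per-year retention (percent) implied by the overall retention.

    ASSUMPTION: attrition happens at a constant rate each year. Neither
    study reports this figure; it is only a way to compare unequal spans.
    """
    return 100.0 * (end / start) ** (1.0 / years)


# Counts quoted in this review.
jy_overall = retention(98, 73)
add_health_overall = retention(20745, 15170)

print(f"Jones and Yarhouse overall: {jy_overall:.1f}%")
print(f"Add Health overall:         {add_health_overall:.1f}%")

# Approximate spans from the text; the endpoints are rough.
for years in (3, 4):
    print(f"Jones and Yarhouse over {years} years: "
          f"{annual_retention(98, 73, years):.1f}% per year")
for years in (5, 6):
    print(f"Add Health over {years} years: "
          f"{annual_retention(20745, 15170, years):.1f}% per year")
```

Under that constant-rate assumption, even the reading most favorable to Jones and Yarhouse (four years) implies a somewhat lower per-year retention than the reading least favorable to Add Health (five years). The two overall percentages look alike only when the difference in time span is ignored.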

What’s more, the Add Health study undertook a rigorous investigation of their dropouts (PDF: 228KB/17 pages) and concluded that the dropouts affected their results by less than 1 percent. Jones and Yarhouse didn’t assess the impact of their dropouts, but they did say this:

We know from direct conversation that a few subjects decided to accept gay identity and did not believe that we would honestly report data on their experience. On the other hand, we know from direct conversations that we lost other subjects who believed themselves healed of all homosexual inclinations and who withdrew from the study because continued participation reminded them of the very negative experiences they had had as homosexuals. Generally speaking, as is typical, we lost subjects for unknown reasons.

Remember, Jones and Yarhouse said that those “experiencing difficulty with change would be likely to get frustrated or discouraged early on and drop out of the change process.” And so assessing the dropouts becomes critically important, because unlike the Add Health study, the very reason for dropping out of this study may have direct bearing on both questions the study was designed to address: Do people change, and are they harmed by the process? With as much as a quarter of the initial population dropping out potentially for reasons directly related to the study’s questions, this missing analysis represents a likely critical failure, one which could potentially invalidate the study’s conclusions.

Jones and Yarhouse describe their sample’s representativeness in contradictory and confusing terms:

Our study examines a representative sample of the population of those in Exodus seeking sexual orientation change. We cannot be absolutely certain of perfect representativeness, since no scientific evidence exists for describing the parameters of such representativeness. Still, we are confident that our participant pool is a good snapshot of those seeking help from Exodus.

Did you get that? It’s representative, but they can’t prove it. But they’re confident anyway.

When researchers make a sweeping statement, especially one as important as representativeness, they bear the burden of providing evidence to support their claim. If they can’t do that, then they must instead caution that their sample may not be representative and list the reasons why. I don’t think a respected peer-reviewed journal would let Jones and Yarhouse get by with claiming representativeness with nothing to substantiate that claim.

Their synopsis doesn’t describe how members were recruited into the study, so we can’t judge what selection biases may occur during recruitment. (I’m sure the book addresses some of this — I’d be shocked if it didn’t.) Nor do they discuss how their demographics might compare with other measures for ex-gay ministry participant populations. There are conferences, rosters, or simple surveys of ex-gay ministry leaders that they could have culled and compared their demographic data with.

But they didn’t appear to have done this, not according to the synopsis anyway.

But I think at least one demographic variable they provided is ample evidence that their sample is not representative. For example, they said that the average age of their sample was 37.50 years. Having asserted that their sample was “fairly representative,” they extrapolated that to the entire Exodus population this way:

The average age was older than we had expected, and its significance should be underscored. There is an unflattering caricature that Exodus groups appeal primarily to young, naïve, confused and sexually inexperienced individuals.

In this statement, Jones and Yarhouse appear to be more interested in defending Exodus’ reputation than in defending their own sample. But when I attended the Exodus Freedom Conference in Irvine, California in June 2007, I got the distinct impression that the average age of the 800 participants was well under 37 years, perhaps even under thirty. The median age of “strugglers” was certainly close to thirty. The conference audience definitely skewed quite young.

We should keep in mind that Jones and Yarhouse limited their study sample to those over eighteen; their youngest participant ended up being twenty-one. Exodus, on the other hand, allowed registrants for their annual conference to be as young as thirteen, although I don’t think I saw anybody that young there. I did see a large number of teenagers, and an extraordinary number of young people, largely under thirty. Exodus had special programs set aside at that conference for younger people which old fogies like me weren’t allowed to attend. Exodus even operates an entire ministry called Exodus Youth, headed by Scott Davis, which specifically targets young people of high school and junior high ages. The Love Won Out ex-gay conferences also conduct several workshops for youth. And again, some of these workshops are closed to older adults.

Jones and Yarhouse may have had good ethical and methodological reasons for limiting their study to those above the age of eighteen. There are issues of informed consent, and questions would undoubtedly arise as to whether youth who are still under their parents’ direction would feel free to answer questions truthfully. But by limiting the study to those above the age of eighteen, Jones and Yarhouse guaranteed that their study would not be representative of Exodus participants overall.

As Timothy Kincaid already reported, the breakdown of the quantitative results went this way:

Keep in mind, however, that these results mix the truly prospective participants (Phase 1 participants who began the study during their first year in Exodus ministries) and the retrospective participants (Phase 2 participants who had been in ministries for between one and three years). We don’t know what the mix of these two subpopulations is in the results. Since Jones and Yarhouse already stated that reported change from the prospective Phase 1 group was “a bit less positive,” we know the results aren’t the same. But unless we understand how Phase 1 fared, we don’t know how mixing in people who were asked retrospectively what their beginning orientation was affected the results.

These results were derived using standardized measures based on the Kinsey and Shively-DeCecco scales. And the Shively-DeCecco scales (remember, these separate homosexual and heterosexual attractions onto two separate axes) revealed something particularly interesting:

Changes on the Shively and DeCecco ratings for all three of our analysis followed a stable pattern… We see that change away from homosexual orientation are consistently about twice the magnitude of changes toward heterosexual orientation. It would appear, then, that while change away from homosexual orientation is related to change toward heterosexual orientation, the two are not identical processes. The subjects appear to more easily decrease homosexual attraction than they increase heterosexual attraction. [Emphasis in the original]

In many ways this confirms what many opponents of ex-gay therapy have noted, that attempting to change sexual orientation does not necessarily make someone straight. In fact, this particular finding makes it all the more unlikely, and puts into context Jones and Yarhouse’s characterization of success as “satisfactory, if not uncomplicated, heterosexual adjustment.”

This also, I think, goes a long way toward describing something else. It is often assumed that those who reported the most change were probably bisexual to some degree when starting the change process. To test that theory, Jones and Yarhouse created a subpopulation from their sample that, for want of a better term, they dubbed “The Truly Gay”:

… [T]o be classed as truly gay, subjects must have reported above average homosexual attraction and reported homosexual behavior and reported past embraced of a gay identity. We would emphasize that these were much more rigorous standards than are typically employed in empirical studies to classify research subjects as homosexual. Using this method, 45 out of our total 98 subjects were classed as “Truly Gay,” just less than half the population sample. We expected that the results of change for the Truly Gay subpopulation would be less positive, as they individuals would be those more set and stable in their sexual orientation. This is not what we found. Rather the change reported by the Truly Gay subpopulation was consistently stronger than that reported by others.

It’s unclear to me what they meant by “above average homosexual attraction” in their definition for the “Truly Gay.” Most researchers consider only Kinsey 5’s and 6’s to be “truly gay.” It’s not clear that this is what Jones and Yarhouse did here. By saying “above average homosexual attraction,” do they mean above average for this sample? If so, what was that cut-off? Maybe the book will clear things up. We’ll see.

But let’s assume for a moment that their criteria are valid, and let’s look at this in light of what they noticed about change to begin with: a change away from homosexual attractions at a rate that is about twice the rate of change toward heterosexual attraction. Looked at this way, it is possible that the “stronger change” for the “Truly Gay” subpopulation occurred simply because there was greater potential travel along the Kinsey or Shively-DeCecco scales to begin with; many bisexuals would have begun their attempts to change already partway down those paths. And since overall, the best functioning was “satisfactory, if not uncomplicated, heterosexuality,” it appears that for both groups, there was a finite limit short of Kinsey 0 or 1 that few in either group approached.

So far, we’ve talked about statistical measures of change based on Kinsey and Shively-DeCecco scales. Jones and Yarhouse also described some qualitative analysis, based on open-ended questions about participants’ attractions, experiences and identity. Those results were:

To further understand what all this means, it would be important to know how the dropouts might have affected these results. As we mentioned earlier, the Adolescent Health study (which Jones and Yarhouse upheld as a “gold standard”) made a concerted effort to understand how their dropouts might have affected the results. In doing so, they discovered that fewer than 5% dropped out because of refusal to continue. With that and other information at hand, they were able to determine that their dropouts affected the results by no more than a single percentage point.

Jones and Yarhouse appear to show no similar curiosity, and this represents a very significant failing of their study. In fact, the dropouts might have contributed very significantly towards higher “failure” numbers. But since Jones and Yarhouse appear to be incurious to find out more about this group, we are left in the dark.
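To see why unexamined dropouts matter so much in a sample this small, here is a minimal sketch of a best-case/worst-case bound. The sample sizes (98 at Time 1, 73 at Time 3) come from the synopsis. The “success” count passed in below is purely hypothetical, chosen only to show how the bound behaves; it is not a result from the study, and the function name is my own.

```python
def outcome_bounds(n_start: int, n_retained: int, n_success: int):
    """Return (worst, observed, best) success rates as percentages.

    observed: successes among those who stayed to the end.
    worst:    every dropout counted as a non-success.
    best:     every dropout counted as a success.
    """
    dropouts = n_start - n_retained
    observed = 100.0 * n_success / n_retained
    worst = 100.0 * n_success / n_start
    best = 100.0 * (n_success + dropouts) / n_start
    return worst, observed, best


# 98 and 73 are from the synopsis. The 30 is HYPOTHETICAL, for
# illustration only; it is not a figure reported by the study.
worst, observed, best = outcome_bounds(n_start=98, n_retained=73, n_success=30)

print(f"Observed among completers: {observed:.1f}%")
print(f"If all dropouts failed:    {worst:.1f}%")
print(f"If all dropouts succeeded: {best:.1f}%")
```

Whatever count is plugged in, losing 25 of 98 participants leaves a gap of about 25 percentage points between the worst and best cases. That gap is the uncertainty that a dropout analysis like Add Health’s is meant to narrow.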

Jones and Yarhouse administered the Symptom Checklist-90-Revised (SCL), which they describe as “a respected measure of psychological distress that is often used to measure the effects of psychotherapy.” They report no difference in the SCL scores from Time 1 to Time 3 when compared to others who are undergoing outpatient counseling.

But again, what about the dropouts? Did they report higher SCL scores at Time 1 or Time 2 before dropping out? We don’t know, not from the synopsis anyway. Again, maybe the full book will provide more details. But without this critical information to understand how the dropouts might have affected the results, Jones and Yarhouse cannot confidently conclude that attempting to change produced no harm. At best, they can only conclude that there was no greater degree of distress among those who continued ex-gay therapy when compared to mentally distressed persons undergoing psychological counseling for other issues — and by the way, is that really a legitimate comparison? I think it’s debatable. At any rate, where they chose to look, there was no problem. Where they chose not to look, who knows?

From Jones and Yarhouse’s synopsis of their study, I have a few more questions than answers. Hopefully I’ll get a copy of the full report in a few days. If so, I’ll post a more complete review as time permits. Until then, consider this review a preliminary one.

I’d have to say that I was very impressed with the study’s design, and very disappointed in its execution. Seventy-two participants out of Alan Chambers’ much-repeated “thousands” or “tens of thousands” doesn’t impress me much. I’m especially disappointed with these particular weaknesses:

We’ve waited quite a long time for a better study than Robert Spitzer’s 2003 effort. This study held great promise based on its initial design, but its conduct left much to be desired. Its rigorous design was not matched by similar rigor in execution. And so we’re still left waiting for that definitive breakthrough ex-gay study. I don’t think this one is it.

Update: Stanton Jones Responds

Latest Posts

Featured Reports

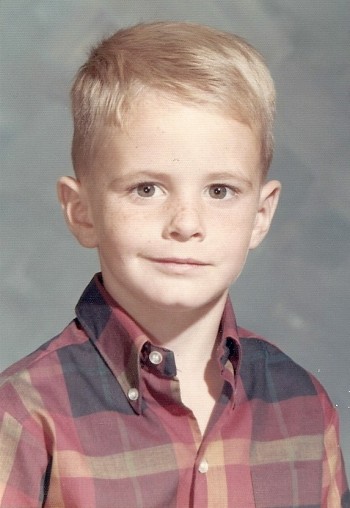

In this original BTB Investigation, we unveil the tragic story of Kirk Murphy, a four-year-old boy who was treated for “cross-gender disturbance” in 1970 by a young grad student by the name of George Rekers. This story is a stark reminder that there are severe and damaging consequences when therapists try to ensure that boys will be boys.

In this original BTB Investigation, we unveil the tragic story of Kirk Murphy, a four-year-old boy who was treated for “cross-gender disturbance” in 1970 by a young grad student by the name of George Rekers. This story is a stark reminder that there are severe and damaging consequences when therapists try to ensure that boys will be boys.

When we first reported on three American anti-gay activists traveling to Kampala for a three-day conference, we had no idea that it would be the first report of a long string of events leading to a proposal to institute the death penalty for LGBT people. But that is exactly what happened. In this report, we review our collection of more than 500 posts to tell the story of one nation’s embrace of hatred toward gay people. This report will be updated continuously as events continue to unfold. Check here for the latest updates.

In 2005, the Southern Poverty Law Center wrote that “[Paul] Cameron’s ‘science’ echoes Nazi Germany.” What the SPLC didn”t know was Cameron doesn’t just “echo” Nazi Germany. He quoted extensively from one of the Final Solution’s architects. This puts his fascination with quarantines, mandatory tattoos, and extermination being a “plausible idea” in a whole new and deeply disturbing light.

On February 10, I attended an all-day “Love Won Out” ex-gay conference in Phoenix, put on by Focus on the Family and Exodus International. In this series of reports, I talk about what I learned there: the people who go to these conferences, the things that they hear, and what this all means for them, their families and for the rest of us.

Prologue: Why I Went To “Love Won Out”

Part 1: What’s Love Got To Do With It?

Part 2: Parents Struggle With “No Exceptions”

Part 3: A Whole New Dialect

Part 4: It Depends On How The Meaning of the Word "Change" Changes

Part 5: A Candid Explanation For "Change"

At last, the truth can now be told.

At last, the truth can now be told.

Using the same research methods employed by most anti-gay political pressure groups, we examine the statistics and the case studies that dispel many of the myths about heterosexuality. Download your copy today!

And don‘t miss our companion report, How To Write An Anti-Gay Tract In Fifteen Easy Steps.

Anti-gay activists often charge that gay men and women pose a threat to children. In this report, we explore the supposed connection between homosexuality and child sexual abuse, the conclusions reached by the most knowledgeable professionals in the field, and how anti-gay activists continue to ignore their findings. This has tremendous consequences, not just for gay men and women, but more importantly for the safety of all our children.

Anti-gay activists often charge that gay men and women pose a threat to children. In this report, we explore the supposed connection between homosexuality and child sexual abuse, the conclusions reached by the most knowledgeable professionals in the field, and how anti-gay activists continue to ignore their findings. This has tremendous consequences, not just for gay men and women, but more importantly for the safety of all our children.

Anti-gay activists often cite the “Dutch Study” to claim that gay unions last only about 1½ years and that the these men have an average of eight additional partners per year outside of their steady relationship. In this report, we will take you step by step into the study to see whether the claims are true.

Tony Perkins’ Family Research Council submitted an Amicus Brief to the Maryland Court of Appeals as that court prepared to consider the issue of gay marriage. We examine just one small section of that brief to reveal the junk science and fraudulent claims of the Family “Research” Council.

The FBI’s annual Hate Crime Statistics aren’t as complete as they ought to be, and their report for 2004 was no exception. In fact, their most recent report has quite a few glaring holes. Holes big enough for Daniel Fetty to fall through.

The FBI’s annual Hate Crime Statistics aren’t as complete as they ought to be, and their report for 2004 was no exception. In fact, their most recent report has quite a few glaring holes. Holes big enough for Daniel Fetty to fall through.

Warren Throckmorton

September 17th, 2007

Jim – While I agree that you have highlighted some flaws in the work, I think it is light years beyond any prior snapshot in time. You will have some of your questions addressed when you get the book as there is much detail there. The study is not perfect but if widespread harm and very little benefit was happening out there, this design would have captured it. Actually, it points out that complete change is infrequent but that for those who continue, they feel benefit. I do agree that it would be good to know what the drop outs think. However, when the drop outs refuse to answer, I do not believe we can fault the researchers.

Jason

September 17th, 2007

Not assessing the dropouts is quite similar to not including those who died in data on the effectiveness of a cancer treatment.

I don’t believe this design would have captured any indication of harm as the researchers didn’t follow-up with the dropouts.

And you are incorrect, it points that complete change does NOT happen. Remember, the “successful” reported a reduction, not an elimination or absence of homosexual desires.

It reminds me in college of people who would say “Oh, I quit smoking, except when i’m drinking, and first thing in the morning.” They reduced their smoking, but they did not actually quit.

We can certainly fault the researchers for not bothering to follow up with the drop-outs, which seems to be the case here.

Warren Throckmorton

September 17th, 2007

When you attempt to secure their involvement but they fail to provide data, I am not sure what you would suggest.

jimmy

September 17th, 2007

74 individuals. Hmmm, I wonder if there are more people out there who tried / are trying the whole ex-gay thing? Jerkish sarcasm aside, I don’t think the definition of success used here is honest. If you are still attracted to dudes, and you have no attraction to women (and you are a guy) that means you’re gay. This should be a no brainer, but for some reason it’s not. Since it’s a question of sexual orientation and not self-perception – those folks should not really be counted as successes. I guess this is predicated on the viewpoint that a homosexual orientation is expressed unambiguously physically – that it’s not just a way of thinking about yourself. In that way, for a study like this, the researchers are just going to have to pony up and get the participants to watch a little porn. It’s a matter of being an adult doing a serious study. This is risqué yes, but if you want a physical measurement of what the body does it’s necessary. No one is saying that you must think porn is morally acceptable – just that you have to stimulate an involuntary response somehow that will give a true indication about the literal state of the subject’s sexual attractions. Any old scared-s***less southern Baptist 20-something is going to tell his pastor and all his friends that he is “liking women more every day” as long as they hold social and spiritual capital over the guy’s head. This should be obvious shouldn’t it? There’s no way you are getting honest answers because no one feels / experiences this thing objectively.

Wayne Besen

September 17th, 2007

One often wonders if Dr. Throckmorton is a sociopath. The ease in which this man consistently overlooks or minimizes the enormous pain, guilt and suffering caused by these groups is astounding. The sad part is, he has read enough about these groups to know better. And, his own miserable, if non-existent – success rate (where are the success stories?) as a therapist is even more reason to reject ex-gay therapy.

I can understand that Warren desperately wants the irrational parts of his religion to be right – but it simply is not so on gay issues. His belief system is dead wrong, anathema to sound mental health and it is causing suicides, depression and all sorts of unhealthy behaviors. It is causing families to break up.

Warren – it is time for you to just admit what you know in your heart – attempts to change are doomed to failure and hurt people. Period.

Why don’t you do the right thing already?

Jim Burroway

September 17th, 2007

Change may happen for some highly motivated people. It may not happen, however, for other highly motivated people. But in any case, this study did not support the idea of “complete change,” and Jones and Yarhouse were careful to point that out.

I understand the difficulty of following up with people who don’t want to participate. I hope the book breaks down some of the reasons at least. I would also hope the book gives the most recent Kinsey, Shively-DeCecco, and SCL scores for the dropouts to provide some sort of clue as to what’s going on.

I do believe that the study’s design is, as Warren says, “light years” ahead of anything done before. Maybe the book will give me more confidence. But mixing retrospective and prospective populations, in my view, negates some of the advantages of this study’s rather advanced design.

Ben in Oakland

September 17th, 2007

According to the article in Christianity Today, “Jones and Yarhouse conclude that their research is the most rigorous ever conducted on this subject.”

Rigorous seems to be open to question, since they had so much trouble getting participants, keeping people in the study, studying the people who chose not to participate, relied on less than 100 people, and ultimately seemed to conclude that no one had actually “changed”.

The only solid conclusions I can see are 1) that believing in Jeebus and giving money to ex-gay ministries probably accomplishes nothing, and 2) that ex-gay ministries do not accomplish what they say they do, as they have no methodology to accomplish it, no way of measuring the success they claim to have, and a whole bunch of people who would just rather not discuss it, or provide any objective proof that a change has actually occurred.

You are right, Mr. Throckmorton. But it truly begs the question. As Jason pointed out, “Not assessing the dropouts is quite similar to not including those who died in data on the effectiveness of a cancer treatment.” As Jimmy pointed out, “Any old scared-s***less southern Baptist 20-something is going to tell his pastor and all his friends that he is “liking women more every day” as long as they hold social and spiritual capital over the guy’s head.”

And as Ted Haggard has pointed out: just because you believe in Jeebus and have struggled against yourself for decades, and just because you now say you are 100% straight, doesn’t make that true.

Timothy Kincaid

September 17th, 2007

My biggest criticism of the study is that the conclusions do not address the hypotheses. Specifically, Yarhouse and Jones set out to find out “Is change in sexual orientation possible”, but look at the definitions of success – they do not address change in sexual orientation. Instead they address compliance with religious teachings.

Chastity is not an aspect of sexual orientation. It is, rather, a purely religious notion (setting it apart from celibacy which may also be secular). Yet the largest group of “successes” were labeled so solely because they established sexual behavior patterns that were consistent with teachings. This definition of success has no identifiable connection with the original question.

And Warren’s endorsement for the study also ignores this disconnect. Warren’s pleased that those who continue “feel benefit”. Perhaps, but this has little to no relevance to the question about orientation.

Jason

September 17th, 2007

I couldn’t agree more, Timothy. “Is change in sexual orientation possible?”

The data we’re presented with here suggest “no, but behavior is.” People can choose to be chaste, even experience a reduction in same sex desire, but no one in this study actually became straight by any fair and honest definition of the word.

Incidentally, Chastity and Celibacy are the equivalent of not playing, which is hardly the same as switching teams.

Lenny

September 17th, 2007

So I guess name calling is permitted here? Can I call Besen “Weird Wacky Wayne”?

Jim Burroway

September 17th, 2007

Technically, it wasn’t namecalling, but I see your point. It was a clever way around namecalling (“One often wonders if…”).

So I guess it might be safe if you were to say “One often wonders if Wayne were weird and wacky.” But I’d prefer not to have to arbitrate these things.

So let’s try to keep things within the spirit and the letter of our comments policy.

SharonB

September 17th, 2007

Check out how the RR press is covering this. Look at the pie-chart: http://www.bpnews.net/bpnews.asp?id=26429&ref=BPNews-RSSFeed0914

They are even calling the “Continuing” category a success! Uh, they are continuing in Exodus because why? Because they ARE NOT AS SUCCESSFUL AS THEY WOULD LIKE TO BE!

Lord, what liars these “kristians” be.

Also note the high degree of sexual compulsiveness in the study participants, especially the males.

Lenny

September 17th, 2007

Yes, one would hope adults can act like adults. Alas, some can’t contain themselves.

grantdale

September 17th, 2007

Jim, just a “minor” but actually very significant correction.

In the launch presentation, J&Y stated that:

“Our first hypothesis was that change of sexual orientation is impossible.” and “Our second hypothesis was that the attempt to change sexual orientation is harmful.”

(emphasis ours)

These two positions they (wrongly) claimed are the official positions of the professional bodies.

From that, they therefore did not set out to prove — and therefore nor did they — the questions “Is change in sexual orientation possible, and are attempts to change harmful?” (the wording that you used.)

(As we know you know all too well, and assuming J&Y didn’t misrepresent what hypothesis they actually used) these seemingly minor wording changes do make a considerable difference to the hypothesis testing etc.

The professional bodies’ official positions are that no evidence exists for the possibility (let alone long-term efficacy) of changing sexual orientation, and that they have concerns about possible harm that may occur in attempting to do so. These are both very open-ended statements, as one would expect.

Leaving aside why J&Y would construct such a straw-man argument in the first place — although that’s a sure sign of the very worst type of study design — it seems they have not, therefore, managed to refute the official statements from the professional bodies.

*** on an important side-note: what their study appears to actually also do is disprove the official Exodus positions that “…affirms reorientation of same sex attraction is possible.” and “[Homosexuality is a] disorder that can be overcome.”

Let’s use the study to test those statements and see where that takes us…

As bald statements about “change”, Exodus is wrong and/or misleading — proven by the very study that they paid for!

(nice job on this BTW Jim! As always.)

grantdale

September 17th, 2007

Warren, your seemingly dismissive (care-less?) lack of concern for the potential for harm continues to bewilder and concern us.

As we know has been put to you in the past, the harm will not and does not appear evident during the period when the mood of exgay participants is being buoyed by their hope of change.

It occurs after those false hopes have been dashed; as they overwhelmingly will be, although this may take several years.

This is a period of their lives that you do not get to see.

(After all, why would such a person engage a therapist who is a very public exgay apologist/advocate? They’ve got to the point of thinking “your side” has damaged them, after all.)

The missing 25 are vital to understand this dynamic; as are the clinical experiences of your professional colleagues who warn of the potential for harm, as are the experiences of ex-exgays, and as will be the futures of the majority of the study participants who are currently suspended in animation and holding out false hopes for change.

I realise these “failures” are an inconvenient blotch on the rosy picture you’d like to present, but at least a hint of some acknowledgement and concern is surely in order?

Lynn David

September 17th, 2007

The missing 25 are a dang big problem when you consider that the idea one drops out has merit.

This study is crap. Light-years ahead of the crap that came before it, but still crap. The numbers are meaningless and Jones & Yarhouse have basically even said so in the book.

If Exodus wants a truly good study, why not hire a psychologist/psychiatrist to create and oversee a mandatory, analytical questionnaire of everyone who comes into an Exodus affiliate ministry? Two levels of questionnaire might be created: a less technical one for those who only attend ministerial activities which are groups or not overseen by a professional therapist, and a second, more technical one for those who do see a professional therapist. Both would be administered questionnaires, given either by the ministerial leader or by the individual professional therapist/psychologist/psychiatrist. This questionnaire would be administered upon entrance into an Exodus ministry and then again at a regular interval (1 year, 1-1/4 years, 1-1/2 years?).

There should also be an exit questionnaire which the Exodus ministry should endeavor to have a client answer; failing that, that person’s primary contact in the ministry should indicate whether the client had been “advancing” or “not.”

One might argue that this is what Exodus should have been doing all along as a “watch-dog” over itself and its member ministries. Harm often isn’t measured in the time of this study. Harm comes to some only after they realize their faith has caused a self-loathing which had forced them from place to place trying to get the gay out to no avail. Harm comes when you realize you screwed away your life doing something that wasn’t productive.

CPT_Doom

September 18th, 2007

My original concerns about this study certainly have not been answered by this analysis. Given the high drop-out rate and the low level of success (however defined), the lack of categorization of the actual “treatments” undergone by the participants is a huge flaw. We don’t know whether there were differences in the processes the participants underwent, or whether those differences could account for the results (or the drop-outs).

More importantly, I would argue, without any biological metrics, Jones and Yarhouse have not answered the question about whether sexual orientation change is possible. Because sexual orientation is, at its heart, a biological phenomenon, having absolutely no measurements, particularly given modern improvements in brain scanning, leaves a gaping hole in the entire endeavor.

Most importantly, there is no way these results should ever be used to address public policy issues, like the rights of gays and lesbians to live freely and wholly in this country. If only 11 participants (and how many of them were in the retrospective group?) achieved the type of functioning promised by Exodus and other groups, how can public policy be based on the illusion that gays and lesbians can/should change?

Benton

September 18th, 2007

The whole argument that the process should continue because some “feel benefit” is spurious. If we used this metric, then we would have morphine readily available to all. No worry about the future harm that can come from addiction; as long as some “feel benefit” then we should continue the treatment unregulated.

William

September 18th, 2007

The phrase “above average homosexual attraction” really intrigues me, and it certainly needs elucidation.

Does it perhaps mean those who are sexually attracted to anyone and everyone else who belongs to the same sex as themselves? If so, the existence of such people is, at best, highly questionable.

Maybe if I knew what “above average HETEROSEXUAL attraction” was, it would help me.

ARE you Kidding Me??

September 18th, 2007

It would be great if this research was conducted by a neutral party. They are both extremely biased, which slants the study greatly.

Yarhouse is a professor at Regent University, founded and headed by Pat Robertson. Regent also funds Yarhouse’s other gender project.

Jones has been quoted as saying homosexuality is destructive and abnormal.

The research methodology used was self-reporting, which is the least reliable. Also the participants were provided directly from Exodus, who also funded the project and paid the participants.

Whether you are for or against reparative therapy and ex-gays, it’s so obvious that this research is unfounded and completely biased, from the people that funded it through those who conducted it.

The issue or idea of bisexuality is rarely discussed, nor is the blatant evangelical position of the parties involved in the study.

Also please review the Policy statements of 10 medical and mental health professions to see just how destructive this therapy is.

http://www.apa.org/pi/lgbc/publications/justthefacts.html

Are we to ignore the collective efforts of these 10 associations for a short-term study, conducted on such biased grounds, that admits the findings may change if the subjects are studied longer?

Does any of this sound like a study you can trust?

n8nyc

September 18th, 2007

One thing I missed. Does their methodology treat the dropouts as censored data? Isn’t censored data treated differently in prospective and retrospective studies? It would be great to know their full model.

Regan DuCasse

September 18th, 2007

Dr. Throckmorton,

Please define “light years ahead”. To all intents and purposes, the motive to address homosexuality is thousands of years old.

The bottom line: entrenchment of negativity towards homosexuality and using religious discipline in a discriminatory way against gay people. With the ultimate goal being, less gay people engaged in actually being gay.

It’s repackaged and less violent than in Biblical times, but it’s hard to take this study seriously when there aren’t any OTHER comparative studies done regarding what ‘highly motivated’ really means and where that motive originated.

Have you ever been interested in finding the ex gay ministry ‘failures’ or ‘drop outs’, who are otherwise successfully gay contraindicated by your standards or the standards of this study?

What about comparative negative behaviors in the heterosexual population who have also submitted to religious discipline? Certainly the situation between T-Dub and Pam Ferguson is the more common situation where gays and lesbians had tried to be straight with all the public trimmings of being so, and couldn’t make it.

Are they included as how often this happens and tracked for long?

See, I AM a curious person. I have really worked hard at finding the data or someone willing to deal about this subject objectively and with a broad enough range of information.

So far, such information has fallen short, doctor. I’m still left wondering and the ex gay business side has still left me quite empty of anything very helpful.

The claims really aren’t squaring with the results.

I used to question homosexuality and it didn’t take much effort to get answers and honest dialogue.

However, questioning the ex gay business for almost the last ten years is how I got around to YOU and Dr. Nicolosi. There is a Living Waters chapter in my neighborhood and the NARTH offices are just up the road.

Talk about stonewalls and cold reception.

The things that make you go hmmmmm….

This isn’t a first amendment issue. Not at all.

Ben in Oakland

September 18th, 2007

Regan’s observations actually suggest another question, and it is one that I hope that Throckmorton especially, as well as Nicolosi, and the authors of the study address. In fact, I think it would be a very interesting study.

Patrick ONeill

September 18th, 2007

Outcomes for Harm – that is not a legitimate measure.

A legitimate measure would be comparison to gays who felt “troubled” by their orientation and received counselling to help them adjust and accept their orientation.

Ben in Oakland

September 18th, 2007

Regan’s observations actually suggest another question, or rather, a set of related questions, and it is one that I hope that Throckmorton especially, as well as Nicolosi, and the authors of the study, would address. And address here. In fact, I think it would be a very interesting study, and throw a great deal of light on the question these good “doctors” are studying.

Pardon my crudeness, but the crudeness is, I believe, the only way to arrive at the nature of the answer without any equivocation or dissembling. To wit: Why are YOU (and by extension, all of the evangelicals, conservatives, and so forth) so obsessed with what makes MY dick hard? Absent any complaints from me as GayEveryman, why are YOU so obsessed with changing what makes MY dick hard? Is this a sign of mental health, maturity, peacefulness? I don’t know anyone among my gay and straight friends who is interested in someone else’s sexual proclivities unless they are actually INTERESTED in those sexual proclivities– and I don’t mean academically. In the absence of any real evidence that homosexuality is harmful, pathological, dangerous, or anything else that it is supposed to be, why is it required that you change it, stop it, or anything else? How much money do you make out of “curing” what isn’t a disease, and does that have any bearing on your willingness to “cure” it?

We know from quantum physics and the social sciences that the observer is necessarily a part of and intimately connected with that which is observed. Where do you fit into that?

In short, why are you not studying homophobia and homoprejudice with the same fervour which you study the effectiveness of ex-gay therapy?

We know that homosexuality is not a disease or, per se, a mental or emotional impairment. (And were it not for the homophobes, I suspect the ex-gay therapists would not have jobs.) I am in a great relationship, I function at a high level, by all measures, I am mentally healthy in every way, as is just about every gay person I know and have known.

The reason the APA dropped homosexuality from its list of mental disorders was that there was absolutely no evidence that being gay is a mental disorder. They had a definition of mental disorder, but to make it stick for gay people they had to ignore their own definition, and say that “Of course. Gay people are mentally disordered BY definition. Just not THIS definition.” It could not hold up to any kind of scientific scrutiny. The really homophobic psychiatrists, like Bieber and Socarides (father of a gay son!!!), the ones who earned their living “curing” gay people, tried to force a referendum on the APA, but it also failed. The whole procedure underlined that prejudice was really the defining issue, not homosexuality, as is often the case on this particular issue. (Not surprisingly, religious reactions to gay people are very similar). First, a whole category of people is defined as mentally ill (or particularly sinful) with no scientific or experiential (or biblical) reason to do so, only a cultural and religious prejudice. Then they have a vote, and presto-change-o, a whole category of people are “cured” overnight. Then, the people whose livelihoods depend on the “mental illness” issue try to make another vote to make all of those people “sick” again. Clearly, not a matter of good science or good medicine, just prejudice. You might call it the politics of diagnosis.

The religious argument holds no more water than the scientific argument. There is a great deal of controversy about the meaning and the relevance of the biblical passages which purport to condemn homosexual acts. Churches are being torn apart by it. There is no actual TRUTH. There is no monolithic religious response to homosexuality any more than there is to any other religious issue, including the nature of god, his message to the world, whether he had a son, capital punishment, war, whether Islam is the last word from the former Midianite storm god known as YHWH, or how much he cares about what makes my dick hard.

I won’t go into all of the arguments here on either side of the religious question. They are not really relevant. There are many old testament passages that condemn many other activities, but this excites no one. There are lists of sinners in the new testament, but no one is starting ex-greedy people ministries, or ex-adultery ministries. There are ex-drunk ministries, but then alcoholics have an observable and verifiable problem.

In short, it really isn’t about religion either, though it certainly SOUNDS better to say it is about religious belief than it is to say it is about plain old prejudice.

This whole thing is not really about God’s alleged word. It is about prejudice, and nothing but prejudice, given a thin veneer of respectability by organized religion, right-wing politicians, and therapists who claim to cure but in fact, as the J&Y study shows fairly well, just make money off of the situation.

Here’s how I know this. As a Jew, I reject the Christian story, and as a thinking human being, I reject so-called Biblical morality, which any thinking person who has read the thing and thought about it can see is barely biblical and certainly not moral. (Those babies whom god murdered in the flood were not sinners needing to be punished. They couldn’t commit a sin even if they wanted to. WHO really sinned here? Judging God by his own standards does not bode well for God).

My rejection of christianity bothers the religious beliefs of no one but the most rabid fundamentalist, nor would any but the most clueless dare say so in public for fear of rightly being called a religious bigot. But let me say that I’m gay, that I reject just this tiniest part of conservative Christian belief, and that I demand an end to the prejudice, and suddenly, religious beliefs are offended, any pretenses to logic, reason, science, or even theology (irony of ironies) are thrown out, letters to the editor are written, right wing ministers and conversion therapists make a lot of money, and right-wing politicians get elected and make a lot of money as well.

So, will you answer my question?

It’s just sex, just not sex that you theoretically enjoy. If you spent the same obsession on war, hunger, poverty, corruption, bigotry, religious intolerance, the environment, (some of which Jesus actually addressed) that you spend on screaming about our sex lives (which Jesus NEVER addressed, despite its paramount importance to christo-hetero-supremacists) and denying us what you take for granted, what a better world this would be.

Stanton L. Jones

September 18th, 2007

Dear Mr. Burroway and the readers of the Box Turtle Bulletin:

Some brief reflections on your review (with a quick added note that we will not be responding much in this type of format; the demands are simply too great):

1. Above all else, thank you for a substantive and thoughtful preliminary review that actually engages the factual, scientific and intellectual issues of the study instead of engaging in the types of character assassination and other negative tactics of some commentators. While I obviously disagree with your conclusion that “we’re still left waiting for that definitive breakthrough ex-gay study. I don’t think this one is it,” you have nevertheless made clear the grounds on which you have made this judgment, have discussed those grounds rationally, and those grounds are areas of legitimate debate.

2. There seems to be considerable confusion about the book not being available until October. The book is in fact available now; it was released the same day as the paper presentation in Nashville. Quite a number of your questions are answered in the full book presentation; the paper is a sampling of the findings, with an emphasis in the paper (admittedly) on the clearer findings that led us to our conclusions. Now to four substantive issues you raise:

3. Psychophysiological measurement: You ask about our failure to use MRI technology. First, we report in this paper and book on the data gathered up to two years ago, and the MRI technology was unavailable then. Second, even today I would not use it. The “No Lie MRI” appears to be just the latest hyped and unproven version of the hope for a foolproof lie detector. A recent New Yorker article by Margaret Talbot (“Duped: Can Brain Scans Uncover Lies?,” July 2, 2007) presents a readable and thorough discussion of just how overly-hyped and poorly validated this new technology is. There is inadequate scientific basis to believe the “No Lie MRI” would be a suitable measure for our subjects.

4. Retention: You put quite a bit of emphasis on our drop-outs and chided our efforts to contact and assess drop-outs without benefit of reading our account of this in the book. You particularly compare us unfavorably to the Add Health Study. Remember that the Add Health Study was a study of adolescents with parental approval, so those researchers were remarkably advantaged in terms of being able to track down missing participants through their families. We had no such advantage with an adult population. We went to extreme lengths to keep people in the study, involving multiple pleas and contacts including those through families and friends, and, when we had contact information at all, with personal calls and pleas from me. At some point, you must respect people’s wishes not to be contacted. We remain proud of our retention rate in the study.

5. Representativeness of sample: You say “I think at least one demographic variable they provided [i.e., age] is ample evidence that their sample is not representative.” To the best of my knowledge, there is only one study that can come close to the claim of getting a representative sample in this area, and that is the Bailey, Dunne, & Martin Australian twin study (Journal of Personality and Social Psychology, 2000) that concluded that genetic factors were not a statistically significant contributor to causation; this is a great sample because it was an exhaustive sample of every twin born in Australia! At some level, the ultimate representativeness of all other samples is debatable. The evidence you cite in your dismissal is a good example of our difficulty: You state your impression that Exodus conferences draw a younger crowd while our sample age is older, and that therefore we have a bad sample. But you have provided no justification that your impressions of Exodus conference ages are valid, nor stated the bases for your inference that conference attenders are in turn representative of those who seek help in local Exodus ministries. We do acknowledge that we cannot prove the representativeness of our sample, but have numerous reasons, cited in the book, for why we think we got something like a representative sample. And one final note: samples have to be adequate to the task of the study, and we discuss in the book the reasons why the sample is adequate to test an absolute hypothesis (that change is impossible) and a strong hypothesis (that the change attempt is often and decisively harmful).

6. Truly prospective: We explicitly discuss in the book the implications of including the Phase 2 group. I would soften your criticism of the retrospective implications of this group, because in contrast to the Spitzer study where subjects could be looking back over many years or decades to remember their prior experience, our Phase 2 group were looking back into their immediate past. More importantly, however, it is vital to note that while the average changes noted were less strong for the Phase 1 group, Phase 1 subjects were proportionally represented in all of our success categories, so an easy dismissal of significant changes in this group is simply not possible.

Again, it was a delight to read a thoughtful review of our study. No study is perfect. To argue that ours is the strongest study yet done in this area is not the same as to argue that it is exemplary or perfect. It is a stronger study than any available, one particularly suited to the hypotheses investigated.

Stanton L. Jones

Jim Burroway

September 18th, 2007

I’d like to thank Dr. Jones for leaving his thoughts on this.

If anyone wants to respond to Dr. Jones, please do so on the new thread I started. Thanks.

Ben in Oakland

September 18th, 2007

I don’t wish to respond to Dr. Jones, but I do wish he would respond to me. As well as Throckmorton, Yarhouse, and Nicolosi.

Why are you so interested? What is your particular agenda? How do you justify that it is about science (the APA) or religion (the current imbroglio in the Episcopal church) when history has demonstrated clearly that it is about neither? How much money is at stake? How much power do you accrue? How many political concerns of other interested parties are addressed?

Why, when it was clear from the results of your study that actual, “uncomplicated” change from homo to hetero does not occur, at least by your methods, do you advocate change, especially by your methods? My homosexuality, like the heterosexuality of my many straight friends, is very unequivocal and very uncomplicated. If the best that you can come up with are celibates and the “complicateds”, then I put it to you that you are leaving something not changed.

So please do address my issues.

Bob in Colo Sprgs

September 18th, 2007

I was saved, and became “a functioning heterosexual” for years. Married, children and able to push back from this slight but increasing temptation for men. After that followed a period of depression and guilt that helped fuel a complete questioning of Christian faith. When you are convinced that God will punish you, and that Satan is pushing these desires, it is possible to be a “functioning heterosexual.” But the relief I found by accepting myself as gay was far greater than anything I have gotten from religion. I also have great empathy for those who go through this ex-gay (self-torture) movement. I do not doubt that for three, five or ten years, that many of the “successes” will claim success — especially since their alternative is to consider themselves subject to God’s wrath. But to what extremes will they go if they finally reach a point where they admit they have been lying to themselves and everyone else?

I was lucky, and have the help I need — from some competent psychologists. And I do not doubt they saved my life.

NancyP

September 18th, 2007

It was mentioned above that Mark Yarhouse was a professor at Regent Univ., the conservative religious school founded by Pat Robertson.

Dr. Stanton Jones is a professor of psychology and also Provost at Wheaton College, the very well-known Evangelical school (probably characterizable as an “Ivy” among schools of this type). He has written a number of books on psychology from a Christian point of view, and a set of books on “God’s Design for Sex”.

Studies of this sort are difficult at best for the reasons noted, but surely it would be better to have investigators from neutral (non-religious) institutions, in part to reduce the discomfort of study participants and possibly increase the percentage with long-term followup. Written questionnaires with the usual yes/no/don’t know or scale 1-6 answer options, with some “extra” questions designed to test the instrument validity, would be a preferable method of gathering data once the individual has been entered into study and given a unique study number. People are more likely to be truthful about sensitive subjects on questionnaires than in face-to-face or phone interviews. One can better ensure anonymity (separation of participant name and results) with such a method: study request done by investigator, (nonsequential) study number then assigned by a research assistant who does not see results on questionnaires but who does the send-out and receipt of questionnaires, study analysis done by investigator.

Just some thoughts.

grantdale

September 19th, 2007

NancyP, personally we see no reason (as such) to think that someone teaching at a Christian college is incapable of good research and honest reporting of their research. Wheaton or Regent even.

The problems arise if they attempted to publish or lecture or speak in public and it didn’t conform to the college’s social/religious expectations. They would be looking for another job.

It reflects the institutional environment, not the person, even though it would also be a safe bet that near most (all?) of those teaching at relatively undistinguished religion-based schools probably also agree with the institution’s policies etc. They’d tend to self-censor.

Of course, if the person actually does have a long and proven history of manipulative, one-sided “research”… it really doesn’t matter where they work: they’re not to be trusted at face value.

George Reckers, as example, Uni. South Carolina School of Medicine, previously at Harvard… secular, mainstream, trustworthy schools — but the man and his work certainly is none of those!

grantdale

September 19th, 2007

/sigh why do we keep doing that? Rekers.

TO GRANT DALE

September 19th, 2007

When research is funded by a right wing church that supports ex-gay conversion (Exodus), or one of the professors of the study not only teaches at Regent but his other research project is funded by a university that is headed by Pat Robertson, don’t you think the study is tainted? It would be like HRC conducting the same project. Let’s not be ridiculous and ignore that fact.

Regent university is not above underhanded ways, need I remind people of the Regent Alumni Ashcroft/Monica Goodling scandal. Or you can just read about it here http://www.boston.com/news…puts_spotlight_on_christian_law_school/

The following are quotes from the researchers and Throckmorton and as far as Rekers is concerned he may be an intelligent man but that doesn’t mean he is not homophobic which is clearly illustrated by the quotes below.

THROCKMORTON

What then will be the strategy of gay political groups?

The courts will be tied up with this matter in the near term. And in the court of popular opinion, we can expect a prolonged media effort and much rhetoric from such groups and a sympathetic press about the crux of the issue being one of civil rights for the class of people known as homosexual. More emphasis than ever will be focused on studies that purport to demonstrate a genetic determination for sexual attraction, thus leading to claims of discrimination directed at this group.

Expect protracted legal, media and legislative battles. A generation of children will grow up with this issue. When it’s all over, if traditional marriage finally prevails, I expect we will look back and say the election of 2004 was a turning point in the effort to maintain marriage as a union of one man and one woman.

Throckmorton, Warren. “Whats next in the arena of Same Sex Marriage?” Morning Call. 5 December 2004.

I am a social conservative….

Social conservatives are generally pro-life, pro-traditional marriage and take their religion as being pretty important in helping to frame their world view.

If the senator wants to win the social conservative guy vote, he needs to do a few things. First, he needs to drop his pledge to appoint only pro-choice justices to the federal bench. Second, he should indicate that his public policy on abortion will be consistent with his Catholic faith. Third, he needs to support legal protection for traditional marriage. And I am sure there are a few more.

Throckmorton, Warren. “Hunting the guy vote.” The Washington Times. 31 October 2004.

David Fishback, chairman of the citizens advisory committee in Montgomery County that crafted the new curriculum, said Mr. Throckmorton thinks homosexuals are “sick and or … sinful.”

Ward, Jon. “Sex-Ed Battles raging in region.” Washington Times. 10 February 2005.

IF HE IS A PSYCHOLOGIST, WHY IS THROCKMORTON SO OBSESSED WITH GAY MARRIAGE?

REKERS

George A. Rekers

Resigned from the APA in protest of the deletion of homosexuality from the Association’s compendium of psychiatric disorders.

—Called on by the Boy Scouts as an expert witness in the Roland D. Pool and Michael S. Geller v. Boy Scouts of America and the National Capital Area Council, Boy Scouts of America. (1998)

Whatever biological component there is to having homosexual urges, homosexual behavior is a “preference,” not an “orientation,” he said — in short, a matter of choice. It’s a choice Rekers clearly considers deeply wrong: As a Southern Baptist, he told the commission, he believes that God destroyed the city of Sodom for allowing homosexuality, as an example to mankind, and that active homosexuals face “eternal separation from God” — in Southern Baptist parlance, the fires of hell.- George Rekers

Thompson, Tracy. “Scouting New Terrain.” The Washington Post. Washington, DC. 2 August 1998.

DOES REKERS SOUND LIKE SOMEONE WHO’S CAPABLE OF AN UNBIASED VIEW?

YARHOUSE & JONES

STANTON L. JONES

Hours of debate at the Episcopal governing convention left dangling the issue of whether to sanction ordination of active homosexuals.

Psychologist Stanton L. Jones of Wheaton, Ill., said those who support ordaining homosexuals are trying “to normalize a pattern which is destructive and abnormal.”

Cornwell, George. “Debate Over Sexuality Fails To Resolve Issue Of Ordaining Homosexuals.” Associated Press. 15 July 1991.

When Evangelical Colleges Turn Liberal

This murk yielded the occasional gem. Wheaton’s provost, Stanton Jones, deserves special mention for his incisive keynote refuting homosexualist ideology.

Walker, Graham. “When Evangelical Colleges Turn Liberal.” The American Spectator. November 2005.

MARK YARHOUSE

Indeed, a devout Christian can decide that “Christ, or God, has a pre-existing claim on their sexuality” that trumps same-sex attractions, Yarhouse said.

Vegh, Steven. “Some groups offering gays opportunities for ‘recovery.’” The Virginian-Pilot (Norfolk, VA). 14 September 2004.

JONES & YARHOUSE

Interview with Stanton Jones and Mark Yarhouse

Authors of Homosexuality: The Use of Scientific Research in the Church’s Moral Debate

How did this book come about?

We (Stan and Mark) have watched for years as the supposed “scientific evidence” has been used in the ethical/moral debates of the various Christian denominations over the divisive topic of homosexuality. The majority of the time, the “evidence” has been used against the traditional moral position that sees homosexual behavior as sin.

Why did you decide to focus on this particular topic?

…as evangelical Christians, it seemed to us that homosexuality is the area where more pressure is being put on the church to depart from the explicit moral teachings of scripture than any other area.

http://www.narth.com/docs/jonesyarhouse.html

20 April 2006

THEY ARE OBVIOUSLY VERY BIASED AS WELL.

YOU CAN ALL SAY WHAT YOU WANT BUT THE PROOF IS ABOVE. THESE PEOPLE HAVE A RIGHT WING AGENDA EVIDENT IN THE ARTICLES THEY WRITE AND WHAT THEY HAVE SAID.

WHAT IS SAD IS THAT THEY ARE RUINING PEOPLE’S LIVES. HOW MANY EX-GAY “SUCCESS” STORIES HAVE TO COME OUT OF THE CLOSET, HOW MANY PEOPLE MUST COMMIT SUICIDE, HOW MANY MEDICAL AND MENTAL HEALTH ORGANIZATIONS HAVE TO OPPOSE THIS PHILOSOPHY BEFORE THEY STOP?

WHAT DO THESE PSYCHOLOGISTS CARE IF THE GLBT COMMUNITY EXISTS? WHY IS THEIR MERE EXISTENCE SO OFFENSIVE THAT IT DRIVES THEM TO THIS POINT? WHY NOT COMPLETE A STUDY ON WHAT MAKES PEOPLE STRAIGHT? WHY NOT ATTEMPT TO TURN PEOPLE GAY TOO? JUST LEAVE PEOPLE ALONE.

they are endowed by their Creator with certain unalienable Rights, that among these are Life, Liberty and the pursuit of Happiness.

a self-evident proposition is one that is known to be true by understanding its meaning without proof.

Jim Burroway

September 19th, 2007

TO WHOEVER IS LEAVING MESSAGES IN ALL CAPS!!!

Please, take a breath, calm down. The shouting isn’t necessary.

I know ex-gay topics touch a very raw nerve among a lot of people. But please, try to be more succinct in your comments. And please don’t repost entire large sections from other sources. Links and summaries are more than sufficient to make your point.

grantdale

September 19th, 2007

Thanks Jim — we could hear that person clearly, all the way across the Pacific. Good lord.

Anonymous person… you entirely miss the point. Prejudice comes from pre (as in, before) judgement. Learn it, live it.

Apart from that, you are a complete goose — what makes you imagine that we think Jones, Yarhouse or Throckmorton are unbiased, legitimate researchers???

We’ve followed them for years. We know better.

But our opinion of them has been formed by their public anti-gay behaviour over the years, and not simply because they work at a conservative Christian college.

For all we know Jones, Yarhouse or Throckmorton could recant of their activities next week. Come out and condemn the abusive, deceptive exgay movement. Name who’s been pumping money into that movement and calling the shots. Be honest about what “change” means.

Sure, they’d all be unemployed about 10 minutes later but I’m equally sure each could again find work in a quiet, public community college someplace rural, teaching psych 101 or something.

Mark

September 19th, 2007

Wayne, I absolutely share many of your feelings towards these ex-gay groups, but please refrain from hinting that Warren is a sociopath. It’s not helpful to the discussion.

Joe Kort

September 20th, 2007

I still believe that if there are any successes in any of these so-called “sexual conversion therapies”, the men and women were *not* gay from the start.

Tonight I met with a client (Ted), one of hundreds like him I have had, who was acting out homosexually as a result of sexual abuse as a child and is in full recovery, not from homosexuality but from trauma and abuse.

Ted found guys through the internet that he could get to worship his muscular athletic body and fellate him. They would even kiss.

While many would say, “This guy is gay or at least bisexual”–he is neither.

We uncovered he was reenacting his childhood trauma and abuse.

As an adolescent an older man told Ted he had a great build for his age–which he did. The abuse went on for several years.

This client is looking for intimacy with men–which our society leaves little to no room for among men.

And Ted is not gay.

He is aroused by women, desires women, has sex with women and compulsively sexually acts out with men.

Yarhouse and his associates would take these straight men, label them as “homosexuals” struggling with “same sex attraction” and, when they heal, identify them as ex-gay.

It is not gay. I wish they would leave “gay” and “homosexual” out of their entire discussion.

Sexual orientation cannot change–ever!

quo mark II

September 21st, 2007

Grantdale, to put the matter very delicately, why do you think people should accept that you are unbiased?

Also, I’m not entirely sure I know what you mean by saying that someone is or is not a ‘legitimate’ researcher. The only point of interest is whether someone is a good, competent researcher or not. Is that what you mean?

grantdale

September 21st, 2007

quo mark II,

You need not be delicate with us, but thank-you for the concern.

We really don’t care if somebody would swear on their mother’s grave that they think we are biased. You’ll look in vain for a statement from us demanding to be called unbiased. Apart from that being somewhat of a futile demand, the charge itself is too easy to trump up in the first place.

Really not going to waste our breath on that sort of thing. Much.

And no, we did not intend to exclude questions of legitimacy. It’s a very different issue to that of bias. The question comes down to one of purpose and whether it contributes to pure knowledge.

Jim has provided a fantastic example of how to do illegitimate research. He’s put a lot of skilful effort in there… he’s a good researcher… but the outcome is thoroughly illegitimate. Only a loon would mistake Jim’s illustration for knowledge about heterosexuals. Only an evil loon would stand up in Court and wave Jim’s illustration around.

You’ll please note that we did not label Jones, Yarhouse or Throckmorton as “bad” or “incompetent”.

hope that helped.

quo mark II

September 21st, 2007

No, grantdale, that answer didn’t help much. There may be a difference between being an ‘illegitimate’ researcher and simply being a biased researcher, but you didn’t make the nature of the difference clear, at least not to me.

Also, I am left wondering why, if you consider accusations of bias too easy to trump up to be taken seriously, you nonetheless make them against others?

grantdale

September 21st, 2007

quo mark II

Whatever. I didn’t accuse them of bias, I said they were biased. Go back and see why I gave my opinion (and check if I was talking to any of them).

I’m not Wikipedia you know, I don’t do other people’s work for them… but…

Could you define pornography for me?

How does it differ to “marital relations”?

(leave any religious viewpoints out of it, that’ll help.)

Paul

January 17th, 2010

Ping back:

http://pennib.blogspot.com/2010/01/homosexuality-can-be-cured_16.html

Ben- shattered heart

November 5th, 2010

Why are we concerned over whether a man who prefers other men can change to heterosexual? This makes no sense. Some people prefer apples over bananas, but you don’t see a study or people trying to change their minds. My question is: why does that concern us? To me, people’s love lives don’t affect other people. Thanks for letting me share.

Ben

Priya Lynn

November 5th, 2010

Ben, it’s because many people believe that it’s wrong to be gay, that if gays can change to heterosexual they should, and that they don’t deserve equal rights under the law.
